AI Operations (AIOPS)

Diagnosing why AI initiatives stall —

and how leaders can move decisions forward

This is an ongoing series examining how organisations move AI from pilot to production. Each section focuses on a specific decision gate where work gets stuck, the failure patterns behind it, and the artefacts that unblock progress.

The Weekly Signal

Diagnosing the failure patterns behind the

AI pilot → production trap

Featured Diagnostic

Jeanswest’s AI Ads: Experimenting with AI, or Letting AI Experiment with Your Brand?

Customers called it "AI slop". The real issue wasn't the aesthetic but the absence of authority. Here is why approving public-facing AI without explicit bounds is a governance failure.

Contact | Join

Get essays, public diagnostics, and invitations to small, focused sessions on operationalising AI straight to your mailbox.

Diagnostic Series

A weekly series diagnosing why AI initiatives stall — and how leaders unblock decisions by clearly bounding risk.

Title

Sub-title

Text

List

Text

List

Sublist

Text

Text

Text

List

Text

List

Text

About the author

Vinesh Prasad works with organisations on AIOPS – Operationalising AI. His work focuses on identifying the decision gates that block progress, diagnosing failure patterns, and converting expert judgement into signable operating artefacts.This article is part of an ongoing series on operationalising AI beyond pilots. If this problem sounds familiar, you can fill out the contact form or DM him on LinkedIn.

“I’m not a robot”:

When blocking AI agents means losing real customers

In Brief

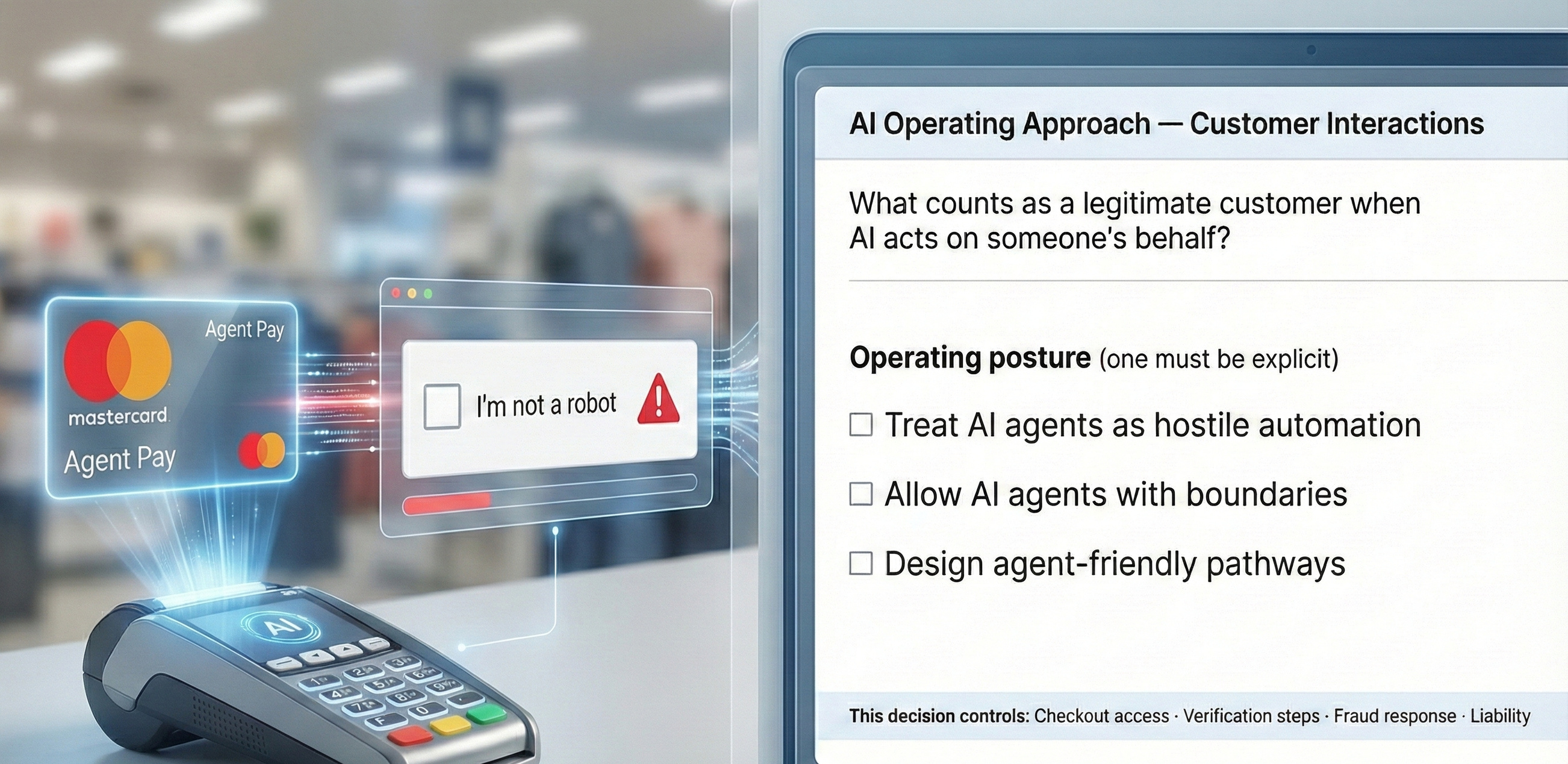

In the past week, Australian retailers have started openly pushing back against the arrival of AI shopping agents, heralded by Mastercard’s agentic payment. They’re being blocked as bots, triggering verification walls and breaking checkouts in the process.

The pattern described in this piece, “AI shopping arrives in Australia — retailers revolt”, looks like a familiar story about security and fraud prevention. Except that this is not a security problem. It’s an operating decision problem.

The story will repeat in the coming week’s and month’s. Organisational systems will make that decision by default and retailers will increasingly lose customers – something that happens when organisations never make an explicit decision about how AI is allowed to participate in their business.

I’m not a robot

As AI-powered shopping agents – tools that search, compare, and even attempt to transact on a customer’s behalf – begin to appear in Australia, many retail sites are responding by blocking them outright. Checkouts fail, verification walls go up and purchases don’t go through.

From the retailer’s perspective, this can look like a sensible security response. From the customer’s perspective, it feels like something else entirely: I tried to buy something, and the company treated me like a robot!

The decision most organisations haven’t made

The pattern underneath these failures is surprisingly consistent: a simple assumption that the “customer” is a human, typing and clicking directly. Retailers have sophisticated fraud controls, bot detection and human verification mechanisms. AI shopping agents break that assumption.

The implicit decision organisations are making is this: We can keep our existing definitions of “legitimate user” and still capture future customers. That decision is rarely stated out loud, owned or revisited as a deliberate choice – until real customers start bouncing.

This isn’t a tech failure. It’s an operating choice failure.

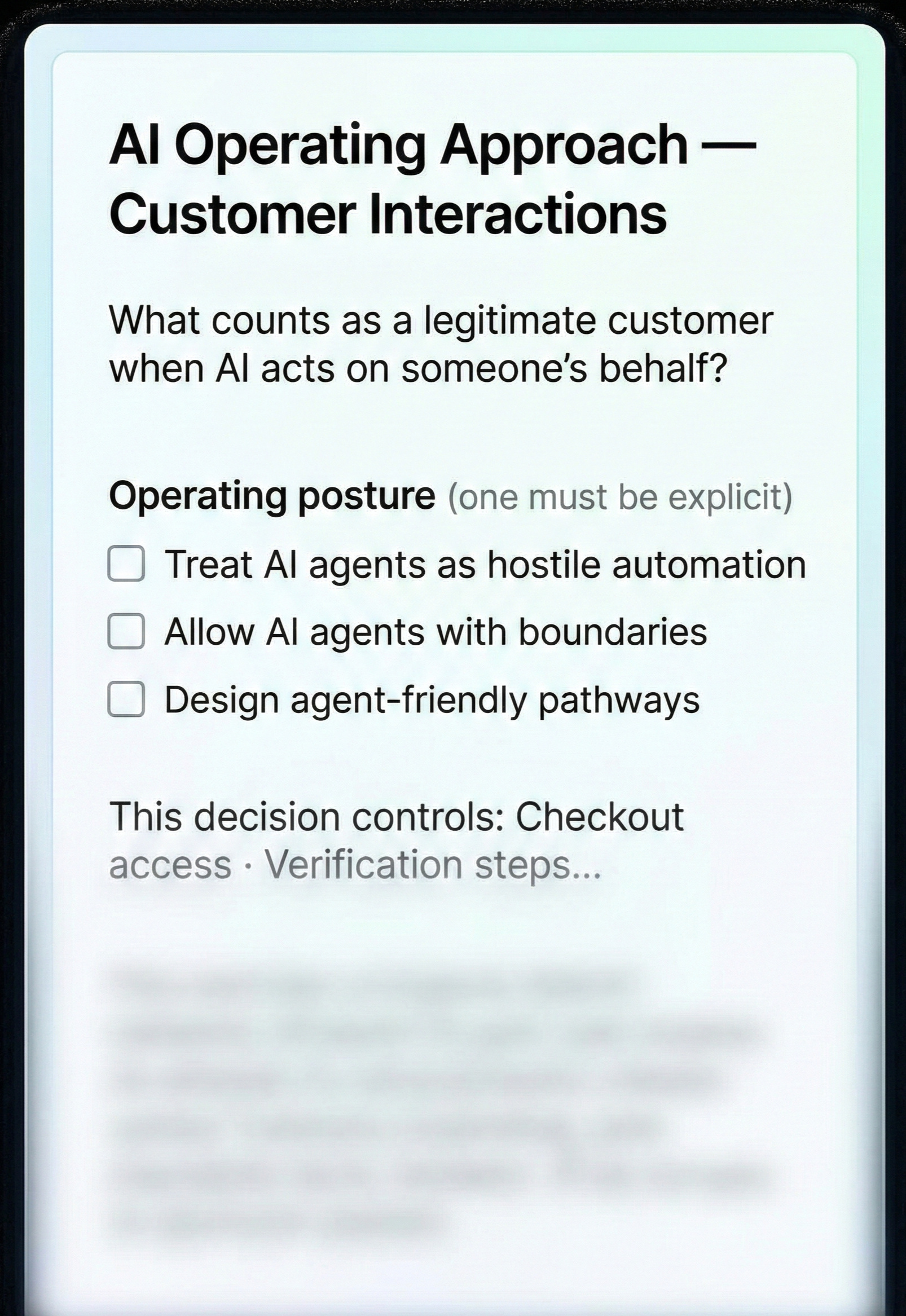

At the core, this is an operating question:

How do we want AI agents to show up in our business?

As hostile automation to be blocked?

As semi-legitimate actors that require extra checks?

As legitimate participants in commerce, with their own designed pathways?

Most organisations haven’t made that decision. So the default position – of security – quietly becomes the decision.

A familiar pattern, just in a new place

Over the past few weeks, we’ve looked at very different AI stories: The Washington Post publishing AI-generated podcasts, legal filings relying on inaccurate AI citations, Jeanswest experimenting with AI-generated creative.

But the same underlying pattern: AI output crossed a boundary where it mattered – legally, reputationally, commercially – without anyone first deciding how that boundary should work.

What experienced operators do differently

Experienced operators force a simple conversation early:

Are we isolating this use of AI, keeping it tightly contained?

Are we allowing it conditionally, with explicit checks and limits?

Or are we integrating it deliberately, designing new pathways around it?

They decide on how AI fits into their operating model and they rewire the process so the choice holds under the scale and speed of AI. That’s why the decision has to exist as a completed, signable artefact: a named owner, explicit boundaries, and a system of record the organisation can point to when it matters.

The missing artefact

A short, explicit operating decision that answers:

What counts as a legitimate AI-assisted customer interaction?

What is allowed today – and what is not?

What constraints matter most (fraud, liability, trust)?

Who owns the decision?

And what happens if it goes wrong?

You can think of it as a decision record for how AI is allowed to participate – in ecommerce, hiring, publishing or any workflow where outcomes matter.

Artefact: AI Operating Approach Decision Canvas

“I’m not a robot” is becoming the wrong question

For over twenty years we’ve designed the internet around a simple assumption: the customer is a human at a keyboard. Increasingly, the interaction will be mediated by an agent acting on their behalf. Ecommerce is colliding with the future using operating assumptions from the past.

That’s why this isn’t a security story. It’s an operating-choice story. And it’s the same story we’ve been watching all month. Week 1 was a media brand discovering that “review” isn’t validation. Week 2 was a legal firm discovering that “AI assistance” isn’t verification. Week 3 was a retailer discovering that “experimentation” isn’t brand representation. Week 4 is commerce discovering that “bot defence” isn’t the same as vetting a real customer in an AI-mediated world.

These are consequential – requiring deliberate decisions recorded in a signable artefact – that makes the decision real, owned, bounded and defensible.

About the author

Vinesh (VP) Karan works with organisations on AIOPS – Operationalising AI. His work focuses on identifying the decision gates that block progress, diagnosing failure patterns, and converting expert judgement into signable operating artefacts.This article is part of an ongoing series on operationalising AI beyond pilots. If this problem sounds familiar, you can fill out the contact form or DM him on LinkedIn.

Jeanswest’s AI Ads:

Are you experimenting with AI or letting AI experiment with your brand?

Jeanswest recently ran social media advertising that featured AI-generated models and content. It didn’t take long for the backlash to arrive. Customers called it “AI slop”, “garbage”. One of the most telling comments wasn’t even about the aesthetic – it was about governance: I wonder who approved it. That sentence is the whole story.

A lot of commentary has described this kind of AI use as “clumsy”, or in poor taste. And I understand why. But that framing is still too shallow. This isn’t about hallucinations or technical failure. It’s about approving a public-facing representation without first making a controlled decision about what your brand is allowed to be represented as.

When the public starts asking who approved something, the issue is no longer “AI quality” or “creative taste”. It becomes an authority problem. Authority is the moment a business allows output to stand in for it. And that’s why this story is more useful than it looks.

What the 5% do differently

There’s a useful point buried in what high performers consistently show across AI work: top leaders understand how AI can create value for the business, and they align the organisation around that. In this context, “value” isn’t just cost reduction; It’s does this strengthen or weaken how we are perceived?

The 5% don’t refuse experimentation. They refuse to experiment without deciding the boundaries first. They decide, before launch:

what AI is allowed to represent,

what it is not allowed to touch,

and what the minimum quality floor is for public-facing work.

Making their boundaries explicit is why their experiments compound, while others become public lessons.

The trade-off wasn’t reckless. It was unbounded

Many teams are deliberately experimenting with AI creative – to move faster, reduce costs, or signal innovation. That decision isn’t reckless by default. What matters is how that decision is operationalised.

This story shouldn’t be framed as: “They didn’t realise what would happen.” It’s better framed as: They made a conscious trade-off — but didn’t make the trade-off explicit, owned, or signable.

Most organisations don’t explicitly decide the trade-off. They just act, then explain later. They ship “as a test”, hoping the risk is temporary, the backlash mild, and the clean-up manageable. For small brands, sometimes it is. However, for larger ones, the reputational footprint can linger.

The failure pattern: “We’ll try it and see how it lands.”

The recurring failure pattern I see across this kind of AI deployment is:

“We’ll try it and see how it lands.”

It sounds reasonable. Even modern. But it assumes something that is rarely true in public-facing work: that if the output crosses the line, someone will catch it in time, and the organisation can recover without cost.

In practice, “we’ll see how it lands” isn’t neutral. It’s implicit risk acceptance without sign-off. It’s letting the public become your quality assurance function. And when it goes wrong, organisations scramble for a line that sounds reassuring: “AI is just one tool in our creative workflow” or “we’re committed to authenticity.” Maybe. But the public doesn’t experience your workflow. They experience the output — and what it implies about your standards.

How experienced operators see it

An expert in this space wouldn’t lead with: “How do we want to use AI in this campaign?” They would lead with: How do we want our brand represented in the wild?

Because once you start there, the tools, including AI, become subordinate. They’re evaluated for whether they help you create value towards the representation you want, not whether they are cheaper, faster, or novel.

This is what’s really being approved when a campaign like this goes live:

This output represents us.

This meets our brand standard.

This level of quality is acceptable to customers.

And, implicitly, this is the kind of authenticity we are comfortable projecting.

Those are not “creative decisions”. They are authority decisions. The backlash, in plain terms, isn’t against AI. It’s against the absence of judgement.

The Brief that would have made this safe

If you want to operationalise AI experimentation without accidentally turning your brand into a test environment, you need a brief that answers one simple question:

Given how we want our brand to be represented,

how does AI create value for our business?

And that brief must specify the artefact that must accompany the campaign to prove compliance to the bounded decision. That’s what makes the decision explicit even for “low-stakes” work.

The artefact that makes the difference

This is where most organisations stay fuzzy. They try to solve the problem with vibes: taste, authenticity, “we’ll be careful”. The 5% produce evidence, an artefact.

In this case, the missing artefact is a Brand Authority Sign-off – a claims/release decision record that travels with the campaign for approval. Not a long policy or a compliance exercise, just a short signable object that answers:

What are we approving this to represent about the brand?

What is the declared quality bar?

What would trigger a pause or rollback?

Who is accountable for the call?

That artefact is how you demonstrate that the decision was bounded, that the trade-off was explicit, and that someone can stand behind it.

A note on “undetectable AI” (and why it makes this more urgent)

One line in the coverage stuck out to me: the suggestion that over time, AI content may become so authentic that it becomes undetectable. But I don’t think that’s the real question.

Whether AI is detectable or not is irrelevant. Because here’s the uncomfortable part: the problem doesn’t get wished away the day AI becomes undetectable. It gets worse. At least today, people can still call out misalignment with your brand. When AI is undetectable, your brand can be dictated by AI and no one even realises that’s what’s happening.

(As an aside: yes, I’m writing this article with the assistance of AI and other tools, but as tools to help me say what I want to say, rather than letting AI say what it wants to say.)

The contrast to notice

Most teams:

experiment by intent,

learn via backlash,

and retroactively explain the decision.

The 5% treat AI claims and representations as controlled content, not creative copy. They bound the risk before they ship, and they leave behind evidence that the decision was made.

That’s the whole point of this Jeanswest signal.

About the author

Vinesh Prasad works with organisations on AIOPS – Operationalising AI. His work focuses on identifying the decision gates that block progress, diagnosing failure patterns, and converting expert judgement into signable operating artefacts.This article is part of an ongoing series on operationalising AI beyond pilots. If this problem sounds familiar, you can fill out the contact form or DM him on LinkedIn.

20 Jan 2026

The Case of AI-Assisted Court Filings

and the Missing Verification Evidence Behind the Disaster

A South Australian solicitor and two Victorian barristers were referred to their state regulators after a court document was found to contain inaccurate and misleading references to case law. The judgment described it as AI “hallucinations”. It also said the extent and way AI was used “remains opaque”. And the court ordered a further $10,000 as costs “thrown away correcting the errors generated by AI.”

It’s the kind of story that travels fast because it triggers two public reactions:

AI made things up, again!

How could the lawyers have approved it?

If you’re in the first camp – that “AI made things up” – you’ll miss the real lesson, and you’ll repeat it in your own organisation, just in a different form. Because this is not an AI problem.

Experienced operators see something else: It’s a decision approval problem. An output crossed an authority boundary without owned verification evidence, and everyone behaved as if that was acceptable. That mental model gap is where most AI use goes wrong.

That AI can generate believable nonsense is clear. But that’s not the interesting bit. The interesting bit is that AI output entered one of the highest-integrity workflows, a court submission, without an explicitly owned verification standard that matched the risk. That’s not “a careless worker”. That’s a broken accountability model.

The missing object

An Approval Pack. You won’t witness a signature without sighting an ID. You shouldn’t accept an output for a high-integrity workflow without traceable verification evidence: a document where the preparer attests that the references are valid. You can’t approve or submit without it. Such a document should be contained in the Approval Pack.

An Approval Pack – or validation pack, sign-off bundle, decision pack – is the evidence-and-steps bundle that makes a decision safe to act on. This is required in ‘most’ traditional high-integrity workflows (witnessing a signature) and should be required anywhere AI output can cross an authority line. It’s what turns “we think this is fine” into “we can stand behind this.”

The failure pattern

The judgment noted that the extent and way AI was used “remains opaque”, and that the solicitor said she didn’t use AI herself, but a paralegal did. The focus is on what training, supervision, or guidance the paralegal had been given regarding AI use. But that ignores a key failure pattern here: the belief that “review will catch it”.

The key insight: Review is not a substitute for verification. And verification does not occur if the requirement for verification ownership didn’t exist in advance.

The AI tool has its own workflow, a probabilistic workflow. But the court does not care who typed the prompts. It cares who filed the document. And that’s the point enterprise leaders need to absorb too: when an output carries your organisation’s authority – legal, financial, public, regulatory – you don’t get to outsource the verification standard to “someone else’s workflow”.

The pattern is consistent: the failure isn’t capability, it’s approval. It’s the moment an organisation gives something permission to operate. Instead of “We’ll just be careful” or “Someone will review it in the end”, the key question ought to be: Under what conditions can AI-assisted output be treated as authoritative enough to act on?

The practice of the 5%

“Rewiring business processes” is a practice of high performers in AI, according to an analysis by McKinsey. The relevant change in process here is to define the preconditions for authority: before something can be filed, published, sent, signed, or relied upon, a set of checks must be satisfied, and someone is named as accountable for those checks.

The missing artefact? A Citations & Authority Verification Checklist – part of an Approval Pack for AI-assisted legal drafting. The checklist contains:

What AI is allowed to do in this workflow (and what it’s not)

What must be verified before any claim crosses the authority line

Who is the operator-of-record (the person who signs the verification)

How each citation/authority is verified and recorded (so it’s evidence, not plausibility)

Even the court pointed to broader risks beyond incorrect citations including privacy, subpoena material, and privilege-waiver risks from entering draft documents into AI programs. High-integrity work can’t run on plausibility. The judgement must be made visible.

The practical end-state: moving forward

When this happens, organisations often respond with either:

a ban (temporary relief, long-term avoidance)

a reminder email (box ticking, quickly ignored)

“we’re reviewing our processes” (true, but usually too vague to change behaviour)

The mature move is different. A short, signable intervention that converts the lesson into an Approval Pack and a Brief operating standard that people can actually follow.

That’s the shift from probabilistic AI output to deterministic business operations.

The flaw in the AI model is not relevant. What’s relevant is that AI has removed the friction that would have historically called for a pause in the workflow because something looks off. Without that, workflows keep moving forward until an external authority catches it. Sadly, in this case, it happened to be the Court.

So ask yourself:

Where in your organisation are you currently relying on “review” to do the job of an explicit decision?

About the author

Vinesh Prasad works with leaders and teams on AIOPS (AI Operations) – making AI outputs safe to rely on in real workflows. He surfaces the decision gates and failure patterns that cause harm, then turns judgement into signable approval artefacts (“approval packs”) that make action safe. He teaches strategy and consulting at UNSW Business School.

If this feels familiar, you can fill out the contact form or DM him on LinkedIn.

13 Jan 2026

Human Validation & Trust in AI –

The Missing Decision at The Washington Post

The Washington Post launched a new feature in its mobile app called Your Personal Podcast. It was pitched as a personalised audio briefing: two AI hosts, your preferred topics, your reading history, and a tidy little “catch me up” experience.Then reality hit.Journalists inside the Post (and people watching from the outside) started flagging issues that aren’t “minor errors” in a newsroom context: misattributed quotes, invented quotes, and commentary creeping into what should be straightforward reporting.Reporting suggested the Post had internal testing that found a large share of scripts failing its own standards, but it launched anyway, aiming to 'iterate through the remaining issues'.This is not a story of AI models getting it wrong. It’s a story of how a use-case is mis-operationalised.This is not an AI problem

I am not suggesting that AI models are foolproof or model owners do not have responsibility. Rather, if you decide to use it, there are things you can do to ‘bound the risk’.An AI pilot can seem like magic. The production environment is way less forgiving. This is the essence of the pilot → production trap.In the case of The Post, the question isn’t “why did the AI make mistakes?”. The question is:Why did a major institution put an AI voice in front of the public, wearing the Post’s credibility, when the output behaved like an intern with confidence and no supervision.The decision gate

A newsroom is basically a trust factory. So if the trust standard isn’t explicit, you don’t have a product problem; you have a credibility incident waiting to happen. In this case, it looks like the trust standard wasn’t just unclear, it was bypassed.Human Validation & Trust is one of the key decision gates teams must pass before AI can safely operate in the real world. In plain terms, this gate is where teams get stuck (or should get stuck) on one hard question:“What do we trust this thing to do?”The failure pattern

Here’s the failure pattern I see in the facts reported:

“Beta” as false risk reduction: calling something a beta can be fine in product development. But in journalism, “beta” doesn’t reduce the reputational radius. The output still sounds like the Post.

Wrong-class errors treated as quality issues: Invented quotes and misattributions are not “quality issues.” They are truth violations.

Institutional voice crossed without an operating contract: Once an AI host is narrating your reporting, it’s no longer a back-office productivity tool. It’s performing the organisation in public.

The Key Lesson: Why AI Fails at the Decision Gate

A pilot is permission to learn. Production is permission to operate.My work focuses on one recurring problem I see across organisations: AI initiatives rarely fail because the models are weak (tools are what they are at any point in human history), but because the deterministic decisions required for production are delayed, blurred, or avoided altogether. Over time, I’ve learned that the fastest way to unblock these initiatives is not better prompts or more pilots, but through a simple but disciplined output: a signable operating Brief.The Washington Post story is a public version of what happens inside enterprises every day:

The pilot proves it can generate plausible output. “Getting to the demo stage wasn’t difficult…”

Everyone gets excited.

Then it touches the real workflow. “...but the trickier part was refining the podcast.”

And suddenly the real questions appear:

Who owns the output?

What happens when it’s wrong?

Who has stop-the-line authority?

The best-practice move of the 5%

McKinsey’s 2025 State of AI work makes a useful point about high performers in AI deployment: they are more likely to have defined processes for how and when model outputs need human validation.Human-in-the-loop can’t mean “a Washington Post editor listens to every personalised episode.” This product is explicitly designed as self-serve, “audience of one" experience: users pick topics, hosts, length, and the system stitches stories based on reading/listening history.So what do the decisions of the 5% look like when “review everything” is impossible? Three very ordinary, very human decisions, bound the risk for them:

What is this AI allowed to do, and what is it not allowed to do? (The “job boundary.”)

What level of exposure are we comfortable with while we learn? (The “blast-radius boundary.”)

What is our validation standard when things go wrong? (The “supervision boundary.”)

The diagnostic artefacts

This is where pilots quietly deceive teams. A pilot can feel “successful” because the room is friendly, the scope is narrow, and everyone treats mistakes as learning.Production is different. Production needs artefacts, because artefacts are how you bound risk and make the supervision decision legible.If I diagnose this through the Human Validation & Trust gate, the “missing artefacts” are the ones that force the organisation to answer, in writing: “What is our trust standard for this class of output?”. The key artefact is the Human Validation Standard which asks:

What can the AI do without review?

What is the “acceptable accuracy” for each content type?

What disables publishing immediately?

These artefacts don’t “solve” the problem. They make the real decision unavoidable before customers and journalists force it for you.Operationalisation – Move work forward

The 5% treat trust like an operating contract, something that can be signed. The diagnosis is turned into a contract: The Brief.The Brief is how you convert a probabilistic pilot into a deterministic business decision.It isn’t a bigger model, or a better prompt. The Brief locks in the decisions you’re otherwise avoiding:

What the AI is for (and what it is not for).

What autonomy class it’s in (assist vs speak/represent).

What the validation standard is when you cannot review every output.

Who owns the standard day-to-day.

What evidence you will track (so trust doesn’t become opinion).

What triggers a rollback or pause.

That is the institutionalisation step: If you can sign that brief, you can operate. If you can’t sign it, you’ve learned something essential: you’re not ready for production, not because AI is “bad,” but because the organisation hasn’t made the deterministic decisions that production demands.And that’s the core lesson the Washington Post story makes visible.

About the author

Vinesh Prasad works with leaders and teams on AIOPS (AI Operations) – making AI outputs safe to rely on in real workflows. He surfaces the decision gates and failure patterns that cause harm, then turns judgement into signable approval artefacts (“approval packs”) that make action safe. He teaches strategy and consulting at UNSW Business School.

If this feels familiar, you can fill out the contact form or DM him on LinkedIn.